Waypoint Models for Instruction-guided Navigation in Continuous Environments

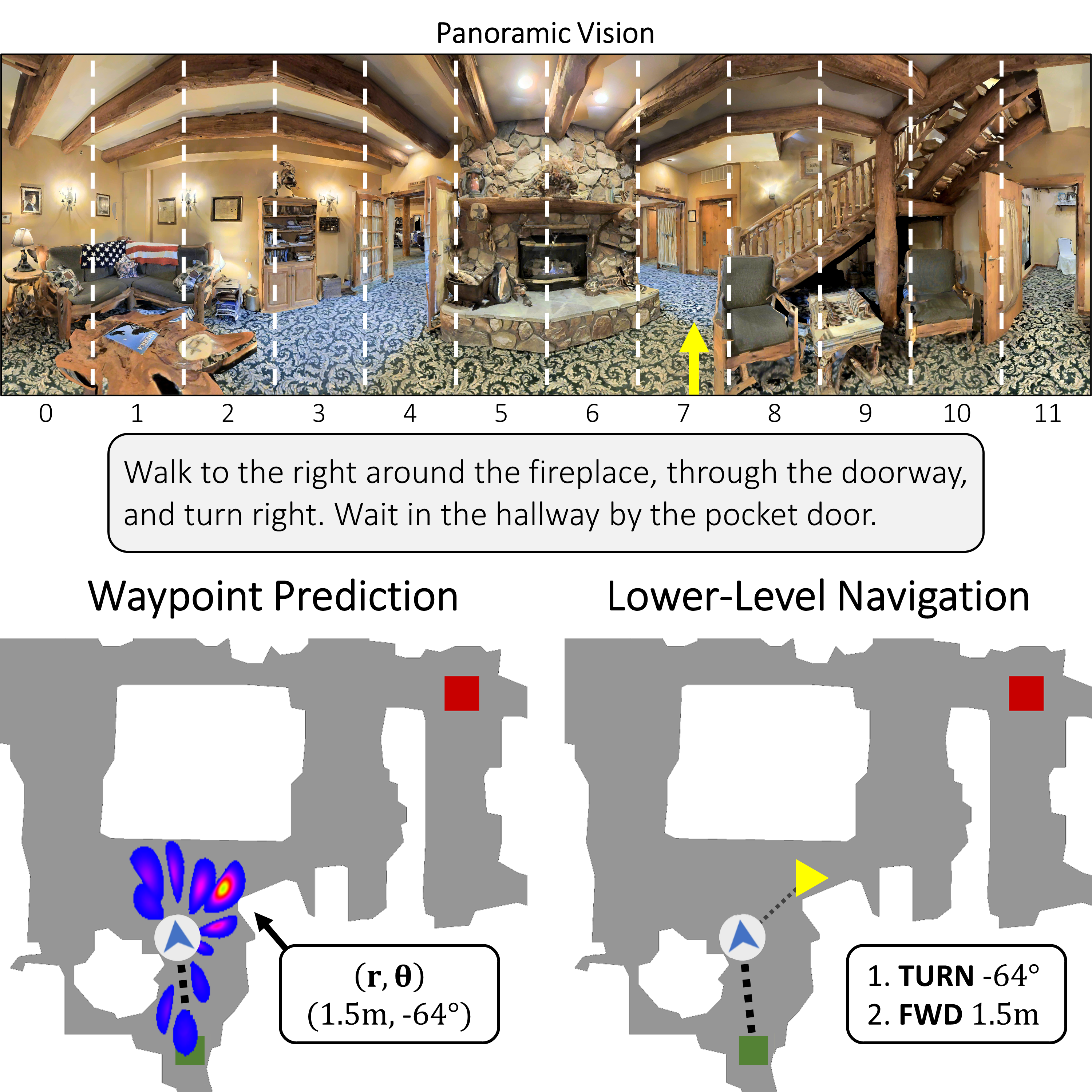

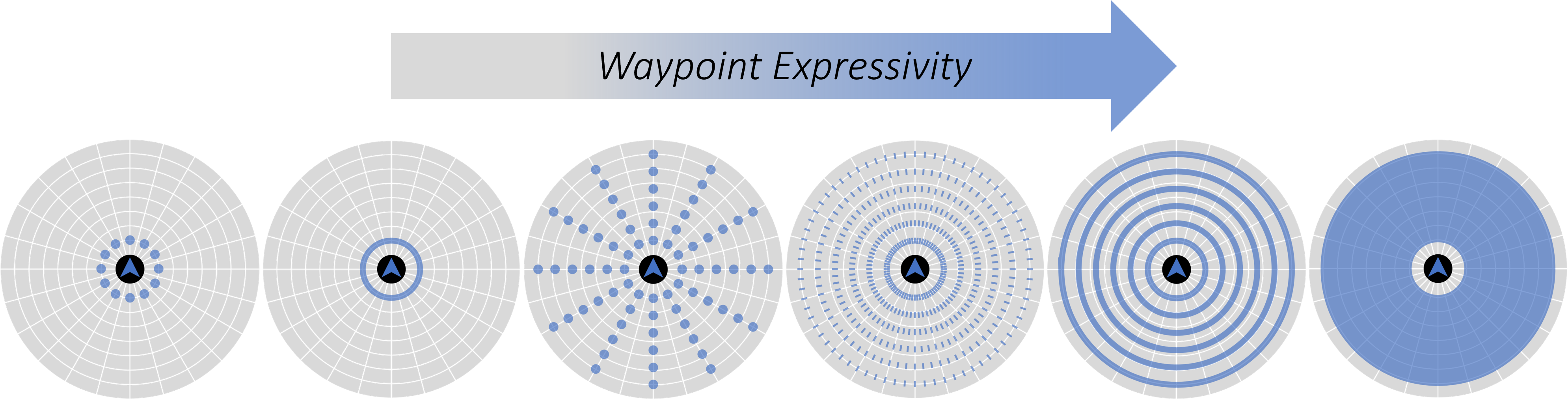

Little inquiry has explicitly addressed the role of action spaces in language-guided visual navigation – either in terms of their effect on navigation success or the efficiency with which a robotic agent could execute the resulting trajectory. Building on the recently released VLN-CE setting for instruction following in continuous environments, we develop a class of language-conditioned waypoint prediction networks to examine this question. We vary the expressivity of these models to explore a spectrum between low-level actions and continuous waypoint prediction. We measure task performance and estimated execution time on a profiled LoCoBot robot. We find that more expressive models result in simpler, faster-to-execute trajectories, but lower-level actions can achieve better navigation metrics by better approximating shortest paths. Further, our models outperform prior work in VLN-CE and set a new state-of-the-art on the public leaderboard – increasing success rate by 4% with our best model on this challenging task.

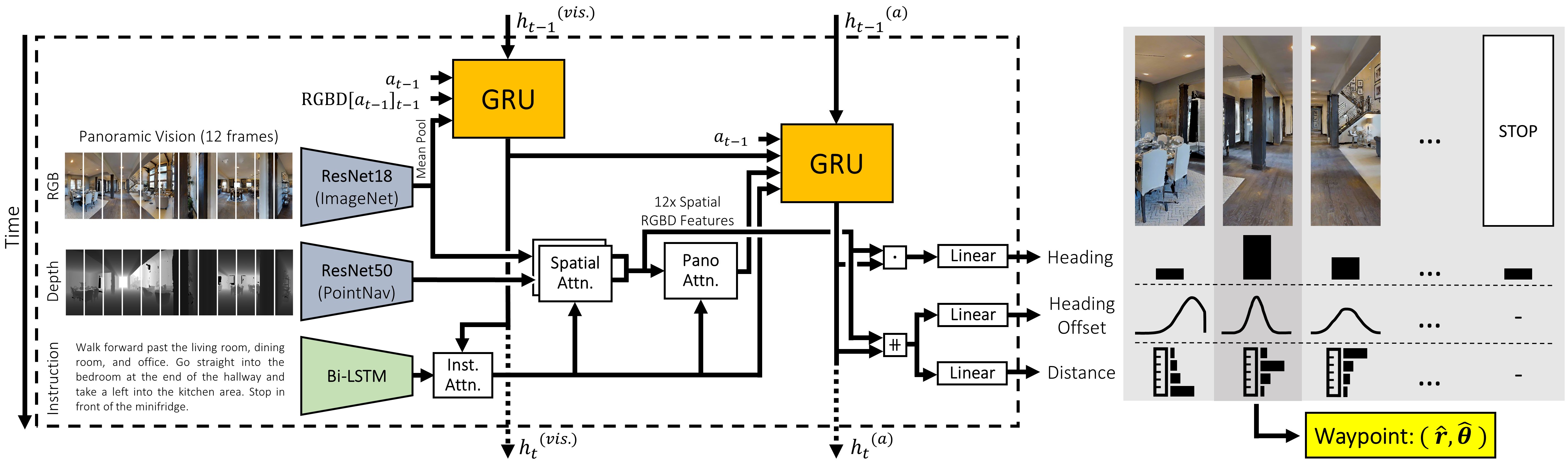

We develop a waypoint prediction network (WPN) that predicts relative waypoints directly from natural language instructions and panoramic vision. Our WPN uses two levels of cross-modal attention and prediction refinement to align vision with actions.

We train the above WPN model with several different action spaces, ranging from the least expressive (12-option heading prediction) to the most expressive (continuous-valued waypoint prediction up to 2.75m away). Generally, we find that less expressive action spaces lead to small improvements in standard metrics over more expressive versions but result in trajectories that would be slower to execute on real agents due to frequent stops and turns.
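To make the two ends of this spectrum concrete, here is a minimal Python sketch of how a relative waypoint might be represented. It is illustrative only and is not the authors' implementation. The function names, the polar (heading, distance) parameterization, the convention that heading 0 points straight ahead, and the even 30-degree spacing of the 12 options are all assumptions. Only the numbers 12 and 2.75 m come from the text above.

```python
import math

NUM_HEADINGS = 12      # from the text: 12-option heading prediction
MAX_DISTANCE_M = 2.75  # from the text: waypoints up to 2.75 m away


def snap_heading(heading_rad: float, num_headings: int = NUM_HEADINGS) -> float:
    """Snap a heading to the nearest of `num_headings` evenly spaced options.

    Assumption: options sit at multiples of 2*pi / num_headings, starting at 0.
    """
    step = 2 * math.pi / num_headings
    index = round(heading_rad / step) % num_headings
    return index * step


def waypoint_offset(heading_rad: float, distance_m: float,
                    max_distance_m: float = MAX_DISTANCE_M) -> tuple:
    """Convert a polar relative waypoint to a (forward, left) offset in metres.

    Assumption: heading 0 is straight ahead and positive headings turn left.
    The distance is clamped to [0, max_distance_m].
    """
    distance_m = min(max(distance_m, 0.0), max_distance_m)
    return (distance_m * math.cos(heading_rad),
            distance_m * math.sin(heading_rad))


# Least expressive end: only the heading is chosen, from 12 options.
coarse = snap_heading(math.radians(40))

# Most expressive end: heading and distance are both continuous.
fine = waypoint_offset(math.radians(40), 1.8)
```

Under these assumptions, a less expressive model can only pick among the snapped headings, which is one way to see why its trajectories involve more stops and turns than a model that predicts a continuous waypoint directly.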

Paper

Waypoint Models for Instruction-guided Navigation in Continuous Environments

Presentation Video

Demonstration Video

Authors

Acknowledgements

We would like to thank Naoki Yokoyama for helping adapt SCT and Joanne Truong for help with physical LoCoBot profiling. This work is funded in part by DARPA MCS.