Sim-2-Sim Transfer for Vision-and-Language Navigation in Continuous Environments

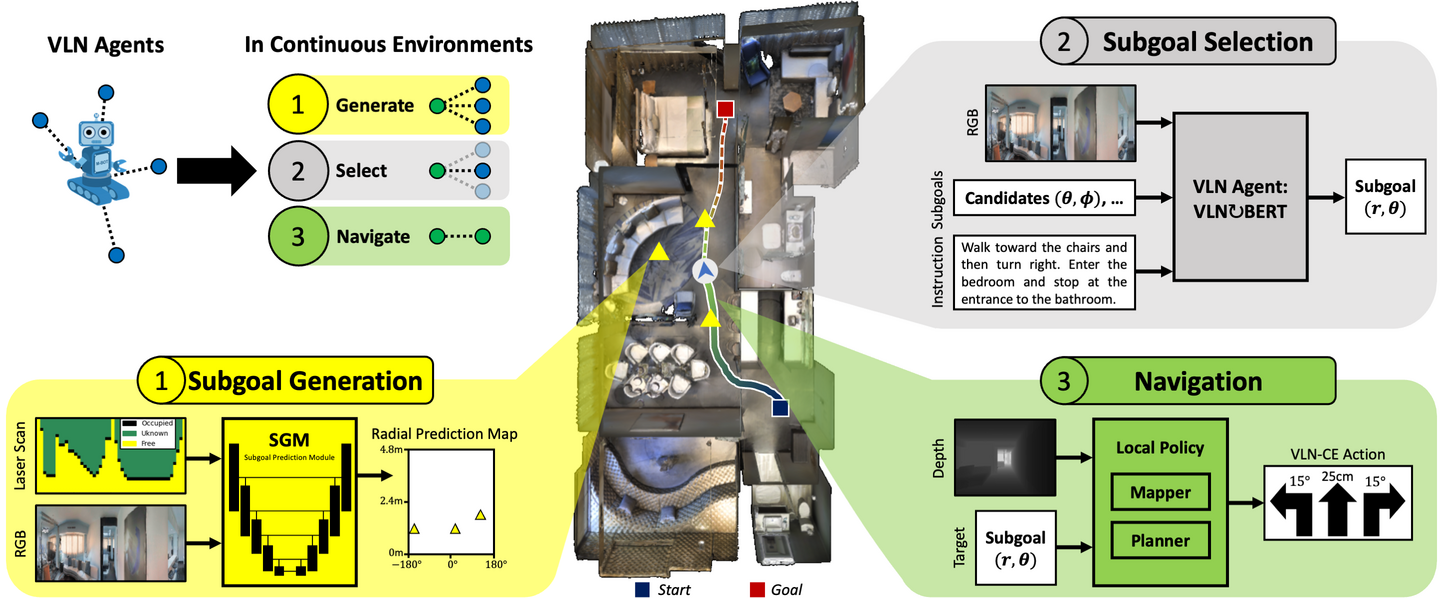

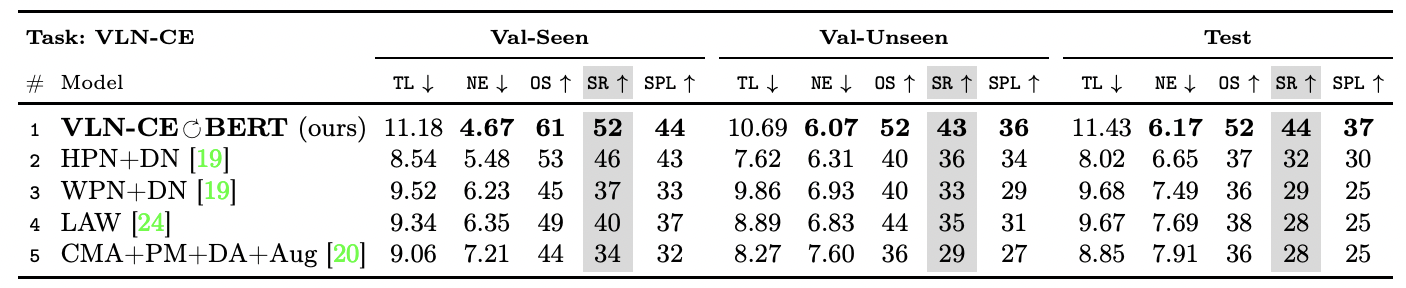

Recent work in Vision-and-Language Navigation (VLN) has presented two environmental paradigms with differing realism – the standard VLN setting built on topological environments where navigation is abstracted away, and the VLN-CE setting where agents must navigate continuous 3D environments using low-level actions. Despite sharing the high-level task and even the underlying instruction-path data, performance on VLN-CE lags behind VLN significantly. In this work, we explore this gap by transferring an agent from the abstract environment of VLN to the continuous environment of VLN-CE. We find that this sim-2-sim transfer is highly effective, improving over the prior state of the art in VLN-CE by +12% success rate. While this demonstrates the potential for this direction, the transfer does not fully retain the original performance of the agent in the abstract setting. We present a sequence of experiments to identify what differences result in performance degradation, providing clear directions for further improvement.

We adopt the Recurrent-VLN-Bert model that performs well on the discrete VLN task (step 2 above). Around this model, we deploy a harness enabling continuous-environment navigation: a subgoal generation module (step 1) and lower-level navigation (step 3). These modules mimic the VLN action space that Recurrent-VLN-Bert was trained on.
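The three-step loop described above can be sketched as follows. This is a minimal illustration rather than the authors' implementation: the `Harness` class, its three callables, the 2D `Position` type, and the `max_steps` default are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

# Hypothetical 2D agent position; a real continuous environment has richer state.
Position = Tuple[float, float]


@dataclass
class Harness:
    """Wraps a discrete-VLN policy so it can act in a continuous environment.

    All three callables are hypothetical stand-ins for the modules in the text.
    """
    # Step 1: propose nearby subgoal candidates from the current position.
    propose_subgoals: Callable[[Position], List[Position]]
    # Step 2: the VLN agent picks a candidate index, or None to stop.
    choose: Callable[[str, List[Position]], Optional[int]]
    # Step 3: lower-level navigation moves toward the chosen subgoal and
    # returns the position actually reached.
    navigate_to: Callable[[Position, Position], Position]

    def run(self, instruction: str, start: Position, max_steps: int = 15) -> List[Position]:
        path = [start]
        for _ in range(max_steps):
            candidates = self.propose_subgoals(path[-1])
            choice = self.choose(instruction, candidates)
            if choice is None:  # the agent decided to stop
                break
            path.append(self.navigate_to(path[-1], candidates[choice]))
        return path
```

Because the agent only ever sees a list of candidates and returns an index, it keeps the same interface it had in the discrete setting; the modules around it absorb the differences of the continuous environment.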

We analyze this model's performance in intermediate transfer settings between discrete and continuous environments. We find a performance gap that can be attributed to:

- A visual domain gap (Matterport images vs. reconstruction renders)

- A navigation gap (teleportation vs. lower-level control)

- A subgoal candidate gap (known-graph waypoints vs. inferred waypoints)
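One way to picture the intermediate settings is as combinations of the three factors listed above, each switched between its discrete and continuous variant. The sketch below only illustrates that framing: the `AXES` table, the `TransferSetting` class, and the option labels are hypothetical, and the experiments themselves may not cover every combination.

```python
from dataclasses import dataclass
from itertools import product
from typing import List

# Each axis maps to (discrete-VLN option, continuous VLN-CE option).
# The labels are illustrative names, not identifiers from any codebase.
AXES = {
    "visuals": ("matterport_images", "reconstruction_renders"),
    "navigation": ("teleportation", "low_level_control"),
    "candidates": ("known_graph", "inferred"),
}


@dataclass(frozen=True)
class TransferSetting:
    visuals: str
    navigation: str
    candidates: str

    def gaps_crossed(self) -> List[str]:
        """Return the axes on which this setting departs from discrete VLN."""
        return [
            axis
            for axis, (discrete_option, _) in AXES.items()
            if getattr(self, axis) != discrete_option
        ]


def all_settings() -> List[TransferSetting]:
    """Enumerate every combination, from fully discrete to fully continuous."""
    return [TransferSetting(*combo) for combo in product(*AXES.values())]
```

Comparing two settings that differ on a single axis isolates the contribution of that one gap, which is the idea behind evaluating intermediate settings rather than only the two endpoints.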

We hope the sim-2-sim analysis in this paper is not only useful for developing performant agents in realistic simulation but can also inform the transfer of instruction-following agents to the real world.

Acknowledgements

This work was supported in part by the DARPA Machine Common Sense program. The views and conclusions contained herein are those of the authors and should not be interpreted as necessarily representing the official policies or endorsements, either expressed or implied, of the U.S. Government, or any sponsor.

Email: krantzja@oregonstate.edu